When I talk with partners about AI, I hear a lot of questions.

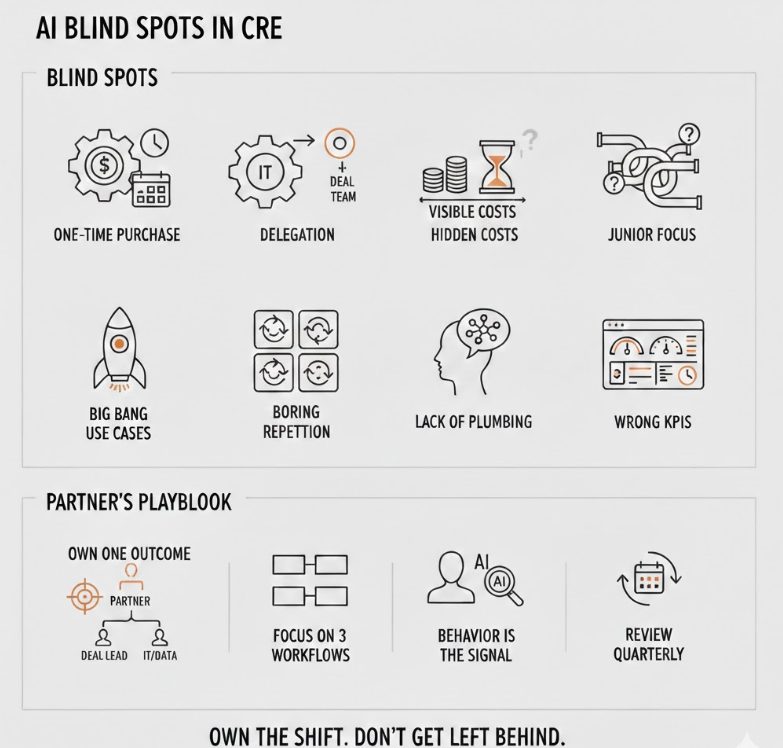

I also see the same blind spots repeat.

Most of the problems are not technical. They are about how partners think about risk, ownership, and time. If you get those wrong at the top, it barely matters which AI tools you pick. The initiative will stall, or worse, quietly create risk without any durable advantage.

Here are the patterns I see most often in CRE partnerships and leadership teams.

1. Treating AI As A One‑Time Software Purchase

Partners love to frame AI as:

- “We just need the right vendor.”

- “Let’s pilot a tool and see the ROI.”

That mindset works for point solutions like a new CRM plugin. It does not work for AI.

AI adoption in CRE is closer to:

- Training a new class of analysts who work differently

- Re‑wiring how information flows through your firm

You do not “install” that and move on. You invest in:

- Iterating prompts and workflows

- Capturing what works into playbooks

- Updating those playbooks as the market and tools change

When a partner says “We’ll try a tool for 90 days and decide,” what they often mean is “we are not budgeting time or attention for the learning curve.” That is not a pilot. That is theater.

2. Delegating AI To IT Or Innovation, Not To The Deal Team

Another pattern:

- AI “lives” with IT, a data group, or a single innovation champion

- The deal team watches from the sidelines

On an org chart, that looks tidy. In reality:

- IT does not live inside your IC meetings

- The data team does not underwrite deals or negotiate LOIs

- The innovation person has no authority to change how partners actually work

So you get:

- Cool demos

- A few internal decks

- No real change in underwriting speed, IC quality, or portfolio decisions

The firms that make real progress put a partner and a working deal lead in charge, with IT and data in a supporting role, not the other way around.

3. Underestimating The Cost Of Doing Nothing

Partners are good at weighing explicit costs:

- License fees

- Headcount

- Consultant hours

They are much worse at pricing the opportunity cost of waiting, even when the evidence is in front of them.

Examples of “hidden” costs:

- The 20–30 percent of analyst time still spent on tasks that could be 80 percent automated

- The missed deals that never get a serious look because your pipeline capacity is capped

- The weaker negotiating position when the other side is using AI for prep and you are not

- The compounding advantage competitors build as they capture and recycle their deal data

On a single quarterly P&L, those costs are invisible. Across three to five years, they show up as “we just seem to be losing more close calls” or “our people leave for firms that run sharper processes.”

If you are not explicitly tracking those costs, you will keep telling yourself that “waiting to see” is free. It is not.
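One way to start tracking is a back-of-the-envelope calculation for the analyst-time line alone. The sketch below is illustrative only: the team size and loaded cost per analyst are hypothetical placeholders you would swap for your own numbers, while the 20 to 30 percent and 80 percent figures come from the list above.

```python
def automatable_cost_per_year(analysts, loaded_cost, share_on_task, automatable):
    """Annual cost of analyst time spent on work that could be automated.

    analysts      -- number of analysts on the team (hypothetical input)
    loaded_cost   -- fully loaded annual cost per analyst (hypothetical input)
    share_on_task -- share of analyst time spent on the repetitive tasks
    automatable   -- fraction of that work that could be automated
    """
    return analysts * loaded_cost * share_on_task * automatable


# Hypothetical firm: 6 analysts at 150,000 fully loaded each.
low = automatable_cost_per_year(6, 150_000, share_on_task=0.20, automatable=0.80)
high = automatable_cost_per_year(6, 150_000, share_on_task=0.30, automatable=0.80)

print(f"Cost of waiting, analyst time only: {low:,.0f} to {high:,.0f} per year")
```

This covers only the first bullet. Missed deals, weaker negotiating positions, and competitors' compounding data advantage are harder to price, but even this one number shows that "waiting to see" has a cost.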

4. Assuming AI Is “For Juniors,” Not For Partners

I hear this one a lot:

- “Our analysts should be all over this.”

- “The kids know this stuff.”

That is true and incomplete.

If AI only lives at the analyst level, you get:

- Faster decks

- Cleaner models

- No change in partner behavior

The real leverage comes when partners use AI for:

- Reading and summarizing key docs before IC

- Running alternative deal narratives in parallel

- Stress testing assumptions against actual history

- Preparing for negotiations and investor conversations

The best firms I see have partners who:

- Ask AI blunt questions they would usually save for late‑stage debates

- Use AI output live in meetings as a neutral “third voice” that forces clarity

- Model that behavior for the rest of the team

5. Chasing Big Bang Use Cases Instead Of Boring Repetition

Partners love a marquee use case:

- “Can we use AI to underwrite deals automatically?”

- “Can we predict the next breakout submarket?”

Those are interesting questions, but they are not where the early returns live.

The best returns come from boring work that repeats every day:

- Intake and triage of new deals

- First‑pass OM and lease abstraction

- Drafting and formatting IC memos

- Standard DD requests and follow‑up email flows

If you insist on a “transformational” use case before you invest, you skip the part where AI proves itself on:

- Time saved

- Fewer errors

- Higher deal throughput

You also deprive your team of the on‑ramp they need to trust AI on more complex work later.

6. Ignoring The Plumbing: Data, Templates, And Governance

Many partners want to talk strategy, not plumbing. They ask:

- “What can AI do for our investment thesis?”

and skip:

- “Where do we actually store models, memos, and outcomes?”

- “Are our templates consistent enough for AI to read them?”

- “Who is allowed to plug what data into which tools?”

Without plumbing, you get:

- Random tools pulling from half‑broken folders

- Sensitive data pasted into public models by mistake

- No way to reuse or audit what AI produced

Partners underestimate how much structure they need to provide:

- One clean folder or system of record for deals

- Standardized templates for IC, DD, and asset reports

- Simple rules for what can and cannot leave your environment

This is not glamorous work, but it is what separates “we played with AI” from “AI is now part of how this firm thinks.”
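The "simple rules" can be very simple. As a minimal sketch, assuming a firm labels documents by sensitivity and approves each tool up to a ceiling, the check might look like the code below. The sensitivity levels, tool names, and ceilings are all hypothetical; they are not drawn from any particular vendor or policy.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1        # e.g. marketing material already in the market
    INTERNAL = 2      # e.g. internal templates and playbooks
    CONFIDENTIAL = 3  # e.g. rent rolls, investor data, live deal models


# Hypothetical tools and the most sensitive data each may receive.
TOOL_CEILING = {
    "public_chatbot": Sensitivity.PUBLIC,
    "enterprise_llm": Sensitivity.INTERNAL,
    "in_house_model": Sensitivity.CONFIDENTIAL,
}


def may_send(doc_sensitivity: Sensitivity, tool: str) -> bool:
    """Return True if a document of this sensitivity may go to this tool."""
    ceiling = TOOL_CEILING.get(tool)
    if ceiling is None:
        return False  # tools nobody has approved are denied by default
    return doc_sensitivity.value <= ceiling.value


print(may_send(Sensitivity.CONFIDENTIAL, "public_chatbot"))  # False
print(may_send(Sensitivity.INTERNAL, "enterprise_llm"))      # True
```

The design choice that matters is the default: a tool that is not on the list gets nothing, so a new tool has to be reviewed before anyone pastes deal data into it.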

7. Measuring AI With The Wrong KPIs

Here is a common pattern:

- Partner: “We tried AI on IC memos. It did not save us 50 percent of the time, so we dropped it.”

What they measured:

- Hours saved on one task in isolation

What they did not measure:

- How many more deals the team could evaluate with the same headcount

- How much faster they could turn around questions from IC

- How many small mistakes vanished because AI acted as a second reader

If you evaluate AI only on direct time savings, you will kill good initiatives prematurely.

Better questions for partners:

- “Did AI meaningfully increase the number of high‑quality decisions we can make per quarter?”

- “Did it reduce our dependence on a few key people who know where everything lives?”

- “Did it improve the audit trail behind our decisions?”
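To make the contrast concrete, here is a rough sketch of a quarter-over-quarter comparison that looks at throughput and IC turnaround rather than hours saved on a single task. The metric names and every number in it are hypothetical, chosen only to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass
class QuarterMetrics:
    deals_evaluated: int       # deals that got a serious look this quarter
    analyst_headcount: int     # analysts on the team
    ic_turnaround_days: float  # typical days to answer a question from IC


def deals_per_analyst(m: QuarterMetrics) -> float:
    return m.deals_evaluated / m.analyst_headcount


def pct_change(before: float, after: float) -> float:
    """Percentage change from before to after."""
    return (after - before) / before * 100


# Hypothetical quarters before and after adopting AI on IC memos.
before = QuarterMetrics(deals_evaluated=40, analyst_headcount=4, ic_turnaround_days=3.0)
after = QuarterMetrics(deals_evaluated=52, analyst_headcount=4, ic_turnaround_days=2.0)

throughput_change = pct_change(deals_per_analyst(before), deals_per_analyst(after))
turnaround_change = pct_change(before.ic_turnaround_days, after.ic_turnaround_days)

print(f"Deals per analyst: {throughput_change:+.0f}%")
print(f"IC question turnaround: {turnaround_change:+.0f}%")
```

In this made-up example the memo task itself might have saved well under 50 percent of the time, yet the team evaluates more deals with the same headcount and answers IC faster. Those are the numbers worth reviewing.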

8. Treating AI Adoption As Optional Culture, Not Core Strategy

Finally, the biggest miss:

Partners often talk about AI in the same tone they use for wellness perks or standing desks. Nice to have. Good optics.

But AI is quickly becoming:

- The way information is organized

- The way institutional knowledge is preserved

- The way patterns in your deals are surfaced and debated

If that lives only in pockets and side projects, you end up with a two‑class firm:

- A small group that gets faster and sharper

- A larger group stuck in legacy workflows

That culture does not hold. The sharp group either leaves or tunes out. The rest dig in.

The firms that are getting this right treat AI adoption as:

- A partner‑level priority

- A standing item in IC, not a one‑off agenda topic

- Part of how they evaluate leadership and team performance

What Partners Should Be Doing Instead

If you are a partner and recognize yourself in any of this, here is a practical reset.

- Own one concrete AI outcome this year

  Not a pilot, not a committee. A real outcome, such as:

  - “Reduce average IC cycle time by 30 percent without losing rigor.”

  - “Standardize and partially automate OM and rent roll intake for 100 percent of deals we see.”

- Put a deal person in charge, with IT support

  - One partner

  - One VP or director who actually runs deals

  - IT and data as enablers

- Start with three boring workflows that repeat every week

  - Do not chase the biggest use case first

  - Prove value in the work your team repeats

- Make your own behavior the signal

  - Use AI yourself in prep, IC, and negotiations

  - Talk about where it helped and where it fell short

  - Ask for better from your tools and your team, in public

- Review and reset quarterly

  - What did we automate or augment

  - What did we learn

  - Where did we see real impact on pipeline, decisions, or risk

Partners do not need to become prompt‑engineering experts.

You do need to stop treating AI as someone else’s problem and start owning how it will change the way your firm sees deals, makes decisions, and competes.

Because your competitors’ partners are already doing that, whether they talk about it on panels or not.

Frequently Asked Questions About AI Blind Spots CRE Partners Need To Fix

AI adoption in CRE is closer to training a new class of analysts who work differently and re-wiring how information flows through your firm. You do not install it and move on. You invest in iterating prompts and workflows, capturing what works into playbooks, and updating those playbooks as the market and tools change. A 90-day trial with no budget for time or attention is not a pilot. It is theater.

IT does not sit inside your IC meetings. The data team does not underwrite deals or negotiate LOIs. When AI lives only with IT or an innovation champion, you get cool demos and internal decks but no real change in underwriting speed, IC quality, or portfolio decisions. The firms making real progress put a partner and a working deal lead in charge, with IT and data in a supporting role.

Partners are good at weighing explicit costs like license fees and headcount but much worse at pricing opportunity cost. Hidden costs include 20 to 30 percent of analyst time still spent on automatable tasks, missed deals that never get a serious look because pipeline capacity is capped, weaker negotiating positions when the other side uses AI for prep, and the compounding advantage competitors build as they capture and recycle their deal data. Across three to five years those costs show up as lost close calls and higher turnover.

If AI only lives at the analyst level, you get faster decks and cleaner models but no change in partner behavior. The real leverage comes when partners use AI for reading and summarizing key documents before IC, running alternative deal narratives in parallel, stress testing assumptions against actual history, and preparing for negotiations and investor conversations. Partners who model that behavior set the tone for the rest of the team.

The best early returns come from work that repeats every day: intake and triage of new deals, first-pass OM and lease abstraction, drafting and formatting IC memos, and standard DD requests. If you insist on a transformational use case before you invest, you skip the part where AI proves itself on time saved, fewer errors, and higher deal throughput. You also deprive your team of the on-ramp they need to trust AI on more complex work later.

Without structure, you get random tools pulling from half-broken folders, sensitive data pasted into public models by mistake, and no way to reuse or audit what AI produced. Partners need to provide one clean system of record for deals, standardized templates for IC, DD, and asset reports, and simple rules for what can and cannot leave the environment. This is not glamorous work, but it separates playing with AI from making AI part of how the firm thinks.

If you evaluate AI only on direct time savings on a single task, you will kill good initiatives prematurely. Better questions include: Did AI meaningfully increase the number of high-quality decisions we can make per quarter? Did it reduce our dependence on a few key people who know where everything lives? Did it improve the audit trail behind our decisions? Those metrics capture the compounding value that simple hours-saved calculations miss.

AI is quickly becoming the way information is organized, institutional knowledge is preserved, and patterns in your deals are surfaced and debated. If it lives only in pockets and side projects, you end up with a two-class firm where a small group gets faster and sharper while the rest stays stuck in legacy workflows. The firms getting this right treat AI adoption as a partner-level priority, a standing item in IC, and part of how they evaluate leadership and team performance.

Own one concrete AI outcome this year, such as reducing average IC cycle time by 30 percent or standardizing OM and rent roll intake for all deals. Put a deal person in charge with IT support. Start with three boring workflows that repeat every week. Use AI yourself in prep, IC, and negotiations. Talk about where it helped and where it fell short. Review and reset quarterly on what you automated, what you learned, and where you saw real impact on pipeline, decisions, or risk.